One of the fund's principals had worked with Whitespectre as a key stakeholder at his previous company. When he saw the gap between what the fund's AI experiments could do and what the team needed to actually rely on, he brought us in to make them production-grade.

Decomposing the evaluation into agents.

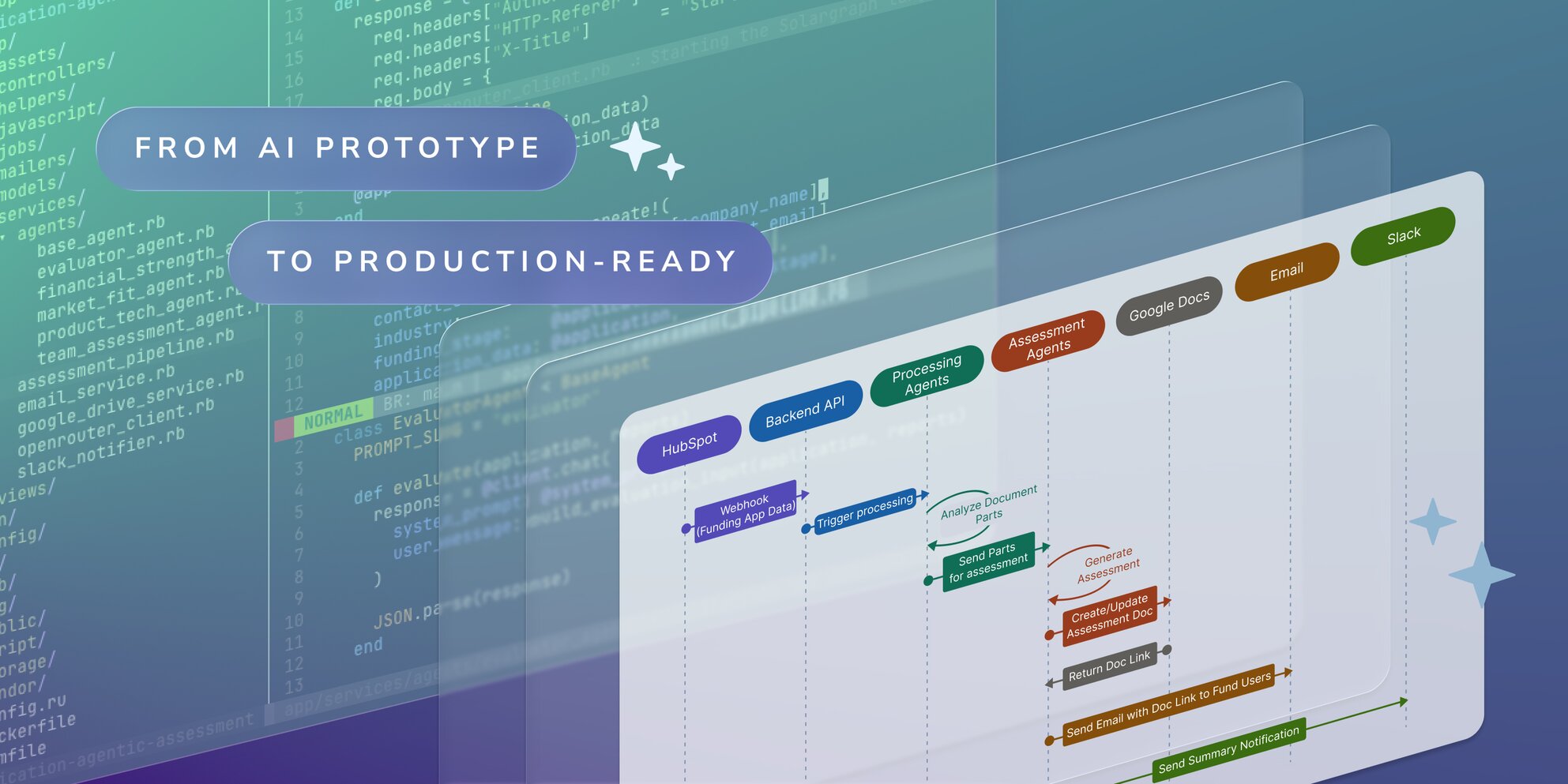

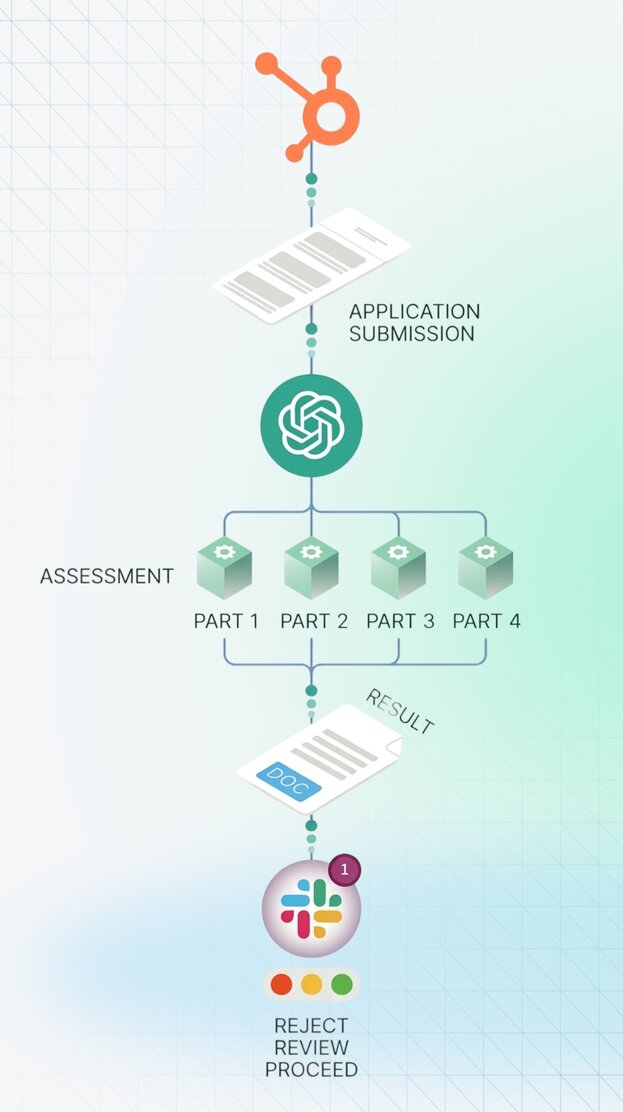

The fund's assessment criteria covered roughly 30 data points across four groups: financial feasibility, business model fit, market positioning, and overall viability. Rather than feeding everything into a single prompt (which produced inconsistent results and made failures impossible to isolate), we broke the evaluation into specialized agents. Each agent handles one criteria group, analyzing the pitch deck and supporting documents against that group's assessment dimensions. A consolidation agent then weighs all outputs and produces the final report.

This mirrors a pattern we've applied across AI projects: smaller, focused agents with cleaner context windows produce more reliable outputs than monolithic prompts - and when something goes wrong, you know exactly where to look.
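
To make the shape of that decomposition concrete, here is a minimal Python sketch. It is an illustration, not the fund's production code: the function names, the prompt wording, and the `model` callable (a stand-in for whichever LLM client is used) are all assumptions. Only the four criteria groups come from the project itself.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stand-in for an LLM call: takes a prompt, returns text.
ModelFn = Callable[[str], str]

# The four criteria groups named in the fund's assessment framework.
CRITERIA_GROUPS = [
    "financial feasibility",
    "business model fit",
    "market positioning",
    "overall viability",
]


@dataclass
class GroupAssessment:
    group: str
    analysis: str


def run_group_agent(group: str, documents: str, model: ModelFn) -> GroupAssessment:
    """One focused agent: sees the documents, but is asked about one group only."""
    prompt = f"Assess the pitch on {group} only.\n\nDocuments:\n{documents}"
    return GroupAssessment(group=group, analysis=model(prompt))


def consolidate(assessments: list[GroupAssessment], model: ModelFn) -> str:
    """Consolidation agent: weighs the per-group outputs into one report."""
    sections = "\n\n".join(f"[{a.group}]\n{a.analysis}" for a in assessments)
    return model(f"Weigh these assessments and write a final report:\n\n{sections}")


def evaluate_pitch(documents: str, model: ModelFn) -> str:
    # Each group runs in isolation, so a bad output can be traced to one agent.
    assessments = [run_group_agent(g, documents, model) for g in CRITERIA_GROUPS]
    return consolidate(assessments, model)
```

Because each agent is a separate call with its own prompt, a weak section in the final report can be traced to a single `run_group_agent` invocation and fixed there, without retuning the rest.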

Structured, usable output.

The pipeline doesn't produce a thumbs-up or a score in a spreadsheet. It generates a full Google Doc - formatted to the fund's existing template - with scored assessments for each criteria group, supporting analysis, and a consolidated recommendation. An experienced partner can open that doc and make a decision in minutes rather than building the analysis from scratch.

Alongside the doc, a Slack notification fires with a short summary, a link to the report, and a green/yellow/red signal - telling partners at a glance whether to jump on a pitch immediately, schedule a review, or move on.
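
As a rough sketch of how such a notification could be assembled, the Python below maps a consolidated score to a traffic-light signal and builds a message payload in the `{"text": ...}` shape that Slack incoming webhooks accept. The 0-100 score scale, the thresholds, and the function names are assumptions made for illustration; the write-up above does not specify how the signal is derived.

```python
# Assumed thresholds on a hypothetical 0-100 consolidated score.
GREEN_THRESHOLD = 75
YELLOW_THRESHOLD = 50

# What each signal tells a partner to do, per the description above.
ACTIONS = {
    "green": "jump on this pitch now",
    "yellow": "schedule a review",
    "red": "move on",
}


def signal_for(score: float) -> str:
    """Map a consolidated score to a green/yellow/red signal."""
    if score >= GREEN_THRESHOLD:
        return "green"
    if score >= YELLOW_THRESHOLD:
        return "yellow"
    return "red"


def build_slack_message(company: str, summary: str, report_url: str, score: float) -> dict:
    """Build the JSON payload for a Slack incoming webhook (not sent here)."""
    signal = signal_for(score)
    text = (
        f"*{company}*: {signal.upper()} ({ACTIONS[signal]})\n"
        f"{summary}\n"
        f"<{report_url}|Open the full report>"  # Slack link syntax: <url|label>
    )
    return {"text": text}
```

Keeping the signal logic in one small function means the thresholds can be reviewed and adjusted against the partners' own judgment without touching the message formatting.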

Integration without infrastructure overhead.

We connected the pipeline using Zapier, linking the fund's existing HubSpot submission form to the AI evaluation engine, Google Docs output, and Slack notifications. No new infrastructure, no complex deployment. This kept the project fast and meant the system could go live against real pitches immediately - which was critical for validating the AI's judgment against the team's own assessments.

Built for the fund to own.

The architecture was deliberately model-agnostic. The fund can swap in newer AI models as they become available - important in a space where model capabilities are improving month over month. More importantly, evaluation criteria and prompts are editable by the fund's assessment team directly, without touching code. As their investment thesis evolves, the assessment evolves with it.
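
One way to picture those two properties together is the sketch below, written in Python under stated assumptions. Criteria and prompts live in plain data (a JSON string here, standing in for whatever editable source the assessment team uses), and the model sits behind a one-method interface so it can be swapped. The `Model` protocol, the `EchoModel` class, and the example prompt and dimensions are all hypothetical.

```python
import json
from typing import Protocol


class Model(Protocol):
    """Anything with a complete() method can serve as the model."""
    def complete(self, prompt: str) -> str: ...


# Hypothetical criteria definition, kept as data so it can be edited without code changes.
CRITERIA_JSON = """
{
  "market positioning": {
    "prompt": "Assess how the company is positioned against competitors.",
    "dimensions": ["differentiation", "market size"]
  }
}
"""


def load_criteria(raw: str) -> dict:
    return json.loads(raw)


def build_prompt(spec: dict, documents: str) -> str:
    """Assemble the agent prompt from the editable criteria spec."""
    dims = ", ".join(spec["dimensions"])
    return f"{spec['prompt']}\nScore these dimensions: {dims}\n\nDocuments:\n{documents}"


def assess(group: str, criteria: dict, documents: str, model: Model) -> str:
    # The pipeline depends only on the Model interface, not on a vendor SDK.
    return model.complete(build_prompt(criteria[group], documents))


class EchoModel:
    """Toy model for local testing: echoes the first line of the prompt."""
    def complete(self, prompt: str) -> str:
        return f"[echo] {prompt.splitlines()[0]}"
```

Swapping models then means passing a different object to `assess`, and changing the assessment means editing the criteria data; neither requires changes to the pipeline code itself.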